Part 2 | Python | Training Word Embeddings | Word2Vec |

Vložit

- čas přidán 27. 07. 2024

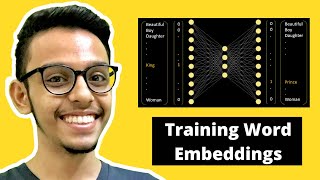

- In this video, we will about training word embeddings by writing a python code. So we will write a python code to train word embeddings. To train word embeddings, we need to solve a fake problem. This problem is something that we do not care about. What we care about is the weights that are obtained after training the model. These weights are extracted and they act as word embeddings.

This is part 2/2 for training word embeddings. In part 1 we understood the theory behind training word embeddings. In this part, we will code the same in python.

‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ

üìï Complete Code: github.com/Coding-Lane/Traini...

‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ

Timestamps:

0:00 Intro

2:13 Loading Data

3:25 Removing stop words and tokenizing

5:11 Creating Bigrams

7:37 Creating Vocabulary

9:29 One-hot Encoding

14:41 Model

19:35 Checking results

21:57 Useful Tips

‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ

Follow my entire playlist on Recurrent Neural Network (RNN) :

üìï RNN Playlist: ‚Ä¢ What is Recurrent Neur...

‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ

✔ CNN Playlist: • What is CNN in deep le...

✔ Complete Neural Network: • How Neural Networks wo...

✔ Complete Logistic Regression Playlist: • Logistic Regression Ma...

✔ Complete Linear Regression Playlist: • What is Linear Regress...

‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ‚ûñ

If you want to ride on the Lane of Machine Learning, then Subscribe ‚ñ∂ to my channel here: / @codinglane

Super good explanation. Very indepth insights given. Thank you for taking time and explaining it

When I see 13k subscribers; 2.5k views and only 85 likes I see why good programmers and good data scientists are rare. You can't get a more concise hands on presentation that teaches you how to create a language model.

Hehe‚Ķ really appreciate your comment ü•∫

good and understandable explanation. It would be great if you may upload a video wherein character level embeddings are also learnt where we may split a word in characters- and do the process.

I really liked. Wonderful tutorial.

Thank you for the video as well as the whole code üòä You could set a random seed to obtain always the same randomly initialized weights.

Thank you for your teaching

Thanks jay, the whole playlist is awesome.. Thank you so much for the creating these wonderful videos and educating us...

You’re welcome

Am happy that I found this channel. You have a gift of teaching.

Thank you! It means a lot to me üòá

‚Äã@@CodingLaneHello, I found your video very informative and clear fundamentals. Just a quick question, what if I have a corpus of total 37million sentences and 150 thousand unique words. How do I train my model without going into complexity of having 150thousand nodes in input for one_hot encoding?

thanks i really enjoy the playlist

Very Informative. Thanks for doing this.

Glad to help üòá

When creating the bigrams (for each of two adjacent words), the order should matter. But why you insert all the possible combinations in the bigrams list?

I think the order of the words as it appear in the corpus is important to capture the relationship between adjacent words.

wonderful explanation, please attach your linkedin profile in YT about section

unique_words = set(filtered_data.flat)

thanks , that was very useful

You're welcome!

man you are doing really well. you stopped uploading videos on machine learning algorithms. when will u resume bro.

Hi Pavan, sorry for not uploading videos. I will upload the next video very soon in the upcoming few days. But might take some time to upload more other videos. Thanks for supporting the channel. And I am glad you find my channel useful üôÇ

Hi Jay "Don't laugh at me, I have always been programing of a external compiler (Java / Matlab) - After launching your github template, the jupyter blank code is not in edit mode, what must I do

How do you make a prediction after u trained your own model ?

Hello, sir, and same to you. Thank you for this video, I show you the same. India is now Bhaarat Aatmanirbhar and have popular searching engines looking for talent to engage! Indian is in need of popular searching engines and any help is good.

Glad to help üòá

hello bro, when we apply cosine similarity function?

Is this feasible for a very large size vocabulary?

King is appearing as a target word twice so two vectors will be created for king ? And then we take average????

You never stated what Python packages are needed to do the creation of the bigrams, the tokenization, and the removal of stopwords. Are you using NLTK?

He coded his own function. See the whole code in Jupiter notebook provided in the description

You are using wrong weights for plotting. weights[0] refers to the weights of the first layer, weights[1] refers to the biases of the first layer, weights[2] refers to the weights of the second layer, and weights[3] refers to the biases of the second layer. You can confirm this by running the following code:

for layer in model.layers:

print(layer.get_config(), layer.get_weights())

Alternatively, you can also use the following where the index [1] refers to the second layer (layer index starts from 0) and the second index [0] refers to weights.

weights = model.layers[1].get_weights()[0]

is this video word2vec from scratch

Yes

Can you please give a private mentor support? Paid service

Hi Ali, I would love to… but currently I am jamm packed in my schedule, so won’t have time to give private mentorship.

@@CodingLane it is just one our, questions about multivariate linear regression.

@@alidakhil3554 Alright, can you msg me on whatsapp/mail?

@@CodingLane could you please share your contact info? Thanks